Mendeley Data vs. Netflix Data November 2, 2010

Posted by Andre Vellino in Citation, Collaborative filtering, Data, Data Mining, Digital library, Recommender, Recommender service.

Mendeley, the on-line reference management software and social networking site for science researchers, has generously offered up a reference dataset with which developers and researchers can conduct experiments on recommender systems. This release of data is their reply to the DataTel Challenge put forth at the 2010 ACM Recommender Systems Conference in Barcelona.

The paper published by computer scientists at Mendeley, which accompanies the dataset (bibliographic reference and full PDF), describes the dataset as containing boolean ratings (read / unread or starred / unstarred) for about 50,000 (anonymized) users and references to about 4.8M articles (also anonymized), 3.6M of which are unique.

I was gratified to note that this is almost exactly the user-item ratio (1:100) that I indicated in my poster at ASIS&T 2010 was typically the cause of the data sparsity problem for recommenders in digital libraries. If we measure the sparseness of a dataset by the number of edges in the bipartite user-item graph divided by the total number of possible edges (users × items), Mendeley gives 2.66E-05. Compared with the sparsity of Netflix – 1.18E-02 – that’s a difference of nearly 3 orders of magnitude!

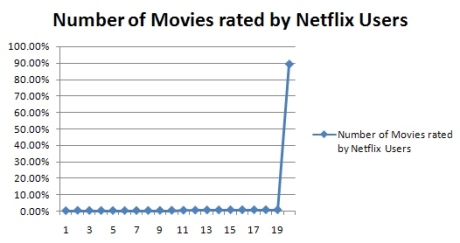
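The sparsity figures above can be roughly reproduced from the rounded counts quoted in this post. The sketch below is only a back-of-the-envelope check: the function name `density` is mine, and because the inputs are rounded (the Netflix counts are the approximate ones quoted further down), the results only approximate the figures given above.

```python
import math


def density(num_edges: float, num_users: float, num_items: float) -> float:
    """Fraction of all possible user-item edges that are actually present."""
    return num_edges / (num_users * num_items)


# Rounded counts as quoted in the post, not the exact dataset sizes.
mendeley = density(num_edges=4.8e6, num_users=50_000, num_items=3.6e6)
netflix = density(num_edges=100e6, num_users=480_000, num_items=17_000)

print(f"Mendeley density: {mendeley:.2e}")
print(f"Netflix density:  {netflix:.2e}")
print(f"Orders of magnitude apart: {math.log10(netflix / mendeley):.1f}")
```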

But raw sparsity is not all that matters. The number of users per movie is much more evenly distributed in Netflix than the number of readers per article in Mendeley, i.e. the user-item graph in Netflix is more connected (in the sense that the probability of creating a disconnected graph by deleting a random edge is much lower).

In the Mendeley data, out of the 3,652,286 unique articles, 3,055,546 (83.6%) were referenced by only 1 user and 378,114 were referenced by only 2 users. Fewer than 6% of the articles referenced were referenced by 3 or more users. [The most frequently referenced article was referenced 19,450 times!]
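To make the arithmetic explicit, here is a small sketch that derives the "3 or more users" share from the counts quoted above, together with a helper showing how such a readers-per-article histogram could be computed from raw (user, article) pairs. The helper name `readers_histogram` and the toy pairs are hypothetical; the real dataset's file layout is not assumed here.

```python
from collections import Counter


def readers_histogram(pairs):
    """Return {number of readers: number of articles with that many readers}."""
    # set() drops repeated (user, article) pairs so each reader counts once.
    readers_per_article = Counter(article for _user, article in set(pairs))
    return Counter(readers_per_article.values())


# Hypothetical toy data: article "a" has two readers, "b" and "c" one each.
toy_pairs = [("u1", "a"), ("u2", "a"), ("u1", "b"), ("u3", "c")]
toy_histogram = readers_histogram(toy_pairs)

# Counts quoted in the post.
total_articles = 3_652_286
one_reader = 3_055_546
two_readers = 378_114
three_or_more = total_articles - one_reader - two_readers

for label, count in [("1 reader", one_reader),
                     ("2 readers", two_readers),
                     ("3+ readers", three_or_more)]:
    print(f"{label}: {count:,} ({count / total_articles:.1%})")
```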

By contrast, in the Netflix dataset (which contains ~100M ratings from ~480K users on ~17k titles), over 89% of the movies had been rated by 20 or more users. (See this blog post for more aggregate statistics on Netflix data.)

I think that user or item similarity measures aren’t going to work well with the kind of distribution we find in Mendeley data. Some additional information such as article citation data or some content attribute such as the categories to which the articles belong is going to be needed to get any kind of reasonable accuracy from a recommender system.

Or, it could be that some method like the heat-dissipation technique introduced by physicists in the paper “Solving the apparent diversity-accuracy dilemma of recommender systems” published in the Proceedings of the National Academy of Sciences (PNAS) could work on such a sparse and loosely connected dataset. The authors claim that this approach works especially well for sparse bipartite graphs (with no ratings information). We’ll have to try and see.
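For the curious, here is a minimal sketch of what one heat-conduction step on an unrated bipartite user-item graph can look like. It follows my reading of the heat-conduction idea (item "temperatures" are averaged onto users, then averaged back onto items); it is not the authors' code, it leaves out the hybrid with probabilistic spreading that the paper proposes, and the function names and toy libraries are hypothetical.

```python
def heat_conduction_scores(libraries, target_user):
    """One heat-conduction step. `libraries` maps user -> set of items held."""
    held = libraries[target_user]
    items = set().union(*libraries.values())

    # Step 1: items held by the target user start at temperature 1, others at 0.
    # Each user's temperature is the mean temperature of the items they hold.
    user_temp = {
        user: len(items_held & held) / len(items_held)
        for user, items_held in libraries.items()
        if items_held
    }

    # Step 2: each item's new temperature is the mean temperature of its holders.
    scores = {}
    for item in items:
        holders = [u for u, items_held in libraries.items() if item in items_held]
        scores[item] = sum(user_temp[u] for u in holders) / len(holders)
    return scores


def recommend(libraries, target_user, n=3):
    """Rank items the target user does not yet hold by descending score."""
    scores = heat_conduction_scores(libraries, target_user)
    candidates = [i for i in scores if i not in libraries[target_user]]
    return sorted(candidates, key=lambda i: (-scores[i], i))[:n]


# Hypothetical toy libraries.
libraries = {
    "u1": {"a", "b"},
    "u2": {"a", "c"},
    "u3": {"b", "c", "d"},
    "u4": {"d", "e"},
}
print(recommend(libraries, "u1"))
```

Because both steps are averages rather than sums, an item's score does not grow simply because many users hold it, which is one reason this family of methods is said to favour less popular items.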

Ex Libris ‘bX’ Recommender Promo Video October 5, 2010

Posted by Andre Vellino in Collaborative filtering, Recommender.

I stumbled across this Ex Libris promo video for its ‘bX’ recommender yesterday. Having done quite a few of these use-case demo scenarios to “show the value”, I appreciate how hard it is to pitch a relatively complex idea in straightforward terms. I think it does a pretty good job too, notwithstanding the slightly over-the-top-happiness tenor of the whole thing.

At the risk of repeating myself, though, there’s one thing that the video glosses over. SFX logs are, effectively, click-logs, and clicks have two sources: search engine results and ‘bX’ recommendations themselves. Hence ‘bX’ recommendations are more likely to be “semantically homogeneous” (although less so than pure search results) because the data they derive from is biased by search-engine ranking. The proportion of SFX traffic that is generated by the recommender itself further narrows the semantic diversity of recommendations.

Are User-Based Recommenders Biased by Search Engine Ranking? September 28, 2010

Posted by Andre Vellino in Collaborative filtering, Recommender, Recommender service, Search, Semantics.

I have a hypothesis (first put forward here) that I would like to test with data from query logs: user-based recommenders – such as the ‘bX’ recommender for journal articles – are biased by search-engine language models and ranking algorithms.

Let’s say you are looking for “multiple sclerosis” and you enter those terms as a search query. Some of the articles presented to you in the search results will likely be relevant, and you download a few of them during your session. This may be followed by another, semantically germane query that yields more article downloads. As a consequence, the usage-log (e.g. the SFX log used by ‘bX’) is going to register these articles as having been “co-downloaded”. Which is natural enough.

But if this happens a lot, then a collaborative filtering recommender is going to generate recommendations that are biased by the ranking algorithm and language model that produced the search-result ranking: even by PageRank, if you’re using Google.
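To illustrate the mechanism, here is a minimal sketch of how a usage log turns into "co-download" recommendations. The session data, article identifiers and function names are hypothetical, and this is not how ‘bX’ is actually implemented; the point is only that whatever the search engine ranks together ends up being counted together.

```python
from collections import Counter
from itertools import combinations


def co_download_counts(sessions):
    """Count how often each unordered pair of articles shares a session."""
    counts = Counter()
    for articles in sessions:
        # sorted(set(...)) gives each pair one canonical (a, b) ordering.
        for a, b in combinations(sorted(set(articles)), 2):
            counts[(a, b)] += 1
    return counts


def recommend(article, counts, n=3):
    """Return the n articles most often co-downloaded with `article`."""
    scores = Counter()
    for (a, b), count in counts.items():
        if a == article:
            scores[b] += count
        elif b == article:
            scores[a] += count
    return [item for item, _count in scores.most_common(n)]


# Hypothetical sessions: the first two follow a "multiple sclerosis" query,
# so the same top-ranked results keep being downloaded together.
sessions = [
    ["ms-review", "ms-mri", "ms-drug"],
    ["ms-review", "ms-mri"],
    ["ms-drug", "vitamin-d"],
]
counts = co_download_counts(sessions)
print(recommend("ms-review", counts))
```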

In contrast, a citation-based (i.e. author-centric) recommender (such as Sarkanto) will likely yield more semantically diverse recommendations because co-citations will have (we hope!) originated from deeper semantic relations (i.e. non-obvious but meaningful connections between the items cited in the bibliography).

Sarkanto Scientific Search September 13, 2010

Posted by Andre Vellino in Collaborative filtering, Digital library, Information retrieval, Recommender, Recommender service, Search.

A few weeks ago I finished deploying a version of a collaborative recommender system that uses only article citations as a basis for recommending journal articles. This tool allows you to search ~7 million STM (Scientific, Technical and Medical) articles up to Dec. 2009 and to compare citation-based recommendations (using the Synthese recommender) with recommendations generated by ‘bX’ (a user-based collaborative recommender from Ex Libris). You can try the Sarkanto demo and read more about how ‘bX’ and Sarkanto compare.

Note that I’m also using this implementation to experiment with the Google Translate API and the Microsoft Translator, both to expand queries into the other Canadian Official Language and to translate various bibliographic fields upon returning search results.

CiteUlike Recommender September 28, 2009

Posted by Andre Vellino in Collaborative filtering, Recommender, Recommender service.

The recommender system that Toine Bogers experimented on a few years ago with CiteUlike data, and which is the subject of a very interesting poster given at Recommender Systems 2008, is now on-line at CiteUlike.

Paradoxically, my personal CiteUlike library of (only) 22 articles (mostly on recommender systems) isn’t sufficient to generate any recommendations. Probably there aren’t enough people who have similar collections.

Evaluating Article Recommenders July 23, 2009

Posted by Andre Vellino in Collaborative filtering, Recommender.

In his March article for CACM, Greg Linden opines that RMSE (Root Mean Square Error) and similar measures of recommender accuracy are not necessarily the best ways to assess their value to users. He suggests that Top-N measures may be preferable if the problem is to predict what someone will really like.

“A recommender that does a good job predicting across all movies might not do the best job predicting the TopN movies. RMSE equally penalizes errors on movies you do not care about seeing as it does errors on great movies, but perhaps what we really care about is minimizing the error when predicting great movies.”
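The point in the quotation can be shown with a toy example: one recommender can have the lower overall RMSE and still be the worse one on the movies a user actually loves. The ratings below are made up for illustration, and `top_n_rmse` is just one simple way (my own, not Linden's) of restricting the error to the user's highest-rated items.

```python
import math


def rmse(predicted, actual):
    """Root mean square error over every item in `actual`."""
    squared = [(predicted[m] - actual[m]) ** 2 for m in actual]
    return math.sqrt(sum(squared) / len(squared))


def top_n_rmse(predicted, actual, n):
    """RMSE restricted to the n items the user actually rated highest."""
    top = sorted(actual, key=actual.get, reverse=True)[:n]
    squared = [(predicted[m] - actual[m]) ** 2 for m in top]
    return math.sqrt(sum(squared) / len(squared))


# Hypothetical ratings on a 1-5 scale: the user loves A and B.
actual = {"A": 5, "B": 5, "C": 2, "D": 1, "E": 1}

# Recommender X is exact on the movies the user dislikes, off on the great ones.
rec_x = {"A": 4.0, "B": 4.0, "C": 2.0, "D": 1.0, "E": 1.0}
# Recommender Y is exact on the great movies, off on the ones the user dislikes.
rec_y = {"A": 5.0, "B": 5.0, "C": 3.2, "D": 2.2, "E": 2.2}

for name, rec in [("X", rec_x), ("Y", rec_y)]:
    print(f"{name}: overall RMSE={rmse(rec, actual):.3f}, "
          f"top-2 RMSE={top_n_rmse(rec, actual, 2):.3f}")
```

In this toy case X wins on overall RMSE while Y is the better guide to what the user will really like.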

This problem is compounded when it isn’t even possible to measure errors of any kind. Suppose you have an item-based recommender for journal articles in a digital library and recommendations are restricted to items in the collection owned by the library. These recommendations are then restricted to a certain set which may be incommensurable with recommendations generated from a different collection. So any quality measure would depend on the size of the collection.

How then would one go about evaluating recommendations in this circumstance? One way is for an expert to inspect the results and judge them for relevance or quality. Another is to measure some meta-properties of the recommendations, such as their semantic distance from one another or from the item they are being recommended from. At least you would be able to say that one recommender offers greater novelty or diversity than another.
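One such meta-property is easy to sketch: the average pairwise distance between the items in a recommendation list, sometimes called intra-list diversity. The example below assumes each article is represented by a set of keywords and uses Jaccard distance as a stand-in for "semantic distance"; both choices, and the sample keyword sets, are illustrative assumptions rather than anything measured on a real recommender.

```python
from itertools import combinations


def jaccard_distance(a, b):
    """1 minus the overlap between two keyword sets (0 = identical, 1 = disjoint)."""
    a, b = set(a), set(b)
    union = a | b
    if not union:
        return 0.0
    return 1.0 - len(a & b) / len(union)


def intra_list_diversity(recommendations):
    """Mean pairwise distance between the items of one recommendation list."""
    pairs = list(combinations(recommendations, 2))
    if not pairs:
        return 0.0
    return sum(jaccard_distance(a, b) for a, b in pairs) / len(pairs)


# Hypothetical keyword sets for two recommendation lists.
similar_list = [
    {"recommender", "collaborative", "filtering"},
    {"recommender", "collaborative", "sparsity"},
    {"recommender", "filtering", "sparsity"},
]
diverse_list = [
    {"recommender", "collaborative", "filtering"},
    {"citation", "bibliometrics", "networks"},
    {"search", "ranking", "query"},
]

print(f"similar list: {intra_list_diversity(similar_list):.2f}")
print(f"diverse list: {intra_list_diversity(diverse_list):.2f}")
```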

This is the kind of approach taken by Òscar Celma and Perfecto Herrera in a paper delivered at Recommender Systems 2008. They concluded that content-based recommendations for music that are less biased by popularity (i.e. more biased toward content-similarity) produced less novelty in recommendations and also less user-satisfaction.

While music listeners may appreciate novelty and diversity, my expectation is that users of recommenders for scholarly articles actually want something closer to “more like this” (content similarity) than “other users who looked at this looked at that” (collaborative filtering).

At least that’s the conclusion (not yet scientifically corroborated) that I came to when I compared a usage-only recommender (‘bX’ from Ex Libris) to a citation-only recommender for scholarly articles (Synthese). At first blush ‘bX’ produces more “interesting” recommendations (greater diversity) whereas Synthese (in citation-only mode anyway) generates more “similar” recommendations.

Perhaps what the user needs is both kinds of recommenders – depending on their information retrieval needs.