The End of Files December 8, 2012

Posted by Andre Vellino in Data, Digital library.

A few weeks ago, I boldly predicted in my class on copyright that the computer file was as doomed in the annals of history as the piano roll (the last of which was printed in 2008 – see this documentary video on YouTube on how they are made and copied!).

This is a slightly different prediction than the one made by the Economist in 2005: Death to Folders. Their argument was that folders as a method of organizing files were obsolete and that search, tagging and “smart folders” were going to change everything. My assertion is that the very notion of a file – these things that are copied, edited and executed by computers – will eventually disappear (to the end-user, anyway).

The path to the “end of files” is more than just a question of masking the underlying data-representation from the user. It is true that Apps (as designed for mobile devices) have begun to do that as a convenient way of hiding the details of a file from the user – be it an application file or a document file. The reason that Apps (generally) contain within them the (references to) data-items (i.e. files) that they need, particularly if the information is stored in the cloud, is to provide a Digital Rights Management scheme. Which is no doubt why this App model is slowly creeping its way from mobile devices to mainstream laptops and desktops (viz. Mac OS Mountain Lion and Windows 8).

But this is just the beginning. There’s going to be a paradigm shift (a perfectly fine phrase, when it’s used correctly!) in our mental representations of computing objects and it is going to be more profound than merely masking the existence of the underlying representation. I think the new paradigm that will replace “file” is going to be: “the set of information items and interfaces that are needed to perform some action in the current use-context”.

Consider, as an example of this trend towards the new paradigm, Wolfram’s Computable Document Format. In this model, documents are created by dynamically assembling components from different places and performing computations on them. The distributed, raw information components – data mostly – are assembled in the application and don’t correspond to a “file” at all. Or consider information mashups like Google Maps, in which restaurant reviews and recommendations are generated as a function of search-history, location, and user-identity. These “content-bundles”, for want of a better phrase, are definitely not files or documents but, from the end-user’s point of view, they are also indistinguishable from them.

Even MS Word DocX “files” are instances of this new model. The Office Open XML file format is a standardized data-structure: XML components bound together in a zip file. Imagine de-regimenting this convention a little and what constitutes a “document” could change quite significantly.
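To make the “XML components bound together in a zip file” point concrete, here is a minimal sketch in Python. It builds a toy archive in memory whose part names follow the usual DocX layout and then lists them. The XML bodies are placeholders I made up for illustration, so the result is not a valid Word document, and the helper names `build_toy_package` and `list_parts` are hypothetical.

```python
import io
import zipfile
from typing import List


def build_toy_package() -> bytes:
    """Build a tiny in-memory zip laid out like a DocX package.

    The XML bodies are placeholders (an assumption for illustration),
    so Word would not open this archive.
    """
    buffer = io.BytesIO()
    with zipfile.ZipFile(buffer, "w", zipfile.ZIP_DEFLATED) as package:
        package.writestr("[Content_Types].xml", "<Types/>")
        package.writestr("_rels/.rels", "<Relationships/>")
        package.writestr("word/document.xml", "<document>Hello</document>")
    return buffer.getvalue()


def list_parts(package_bytes: bytes) -> List[str]:
    """Return the names of the components stored inside the package."""
    with zipfile.ZipFile(io.BytesIO(package_bytes)) as package:
        return package.namelist()


parts = list_parts(build_toy_package())
for name in parts:
    print(name)
```

Pointing `zipfile.ZipFile` at a real `.docx` on disk and calling `namelist()` shows the same idea: the “document” is already a bundle of components plus conventions for assembling them.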

Conventional, static files will continue to exist for some time and version control systems will continue to provide change management services to what we now know as “files”. But I predict that my grandchildren won’t know what a file is – and won’t need to. The procedural instructions required for assembling information-packages out of components, including the digital rights constraints that govern them, will eventually dominate the world of consumable digital content to the point where the idea of a file will be obsolete.

CISTI Sciverse Gadget App December 13, 2011

Posted by Andre Vellino in CISTI, Digital library, General, Information retrieval, Open Access.

Betwixt the jigs and the reels, and with the help of several people at CISTI and Elsevier, I developed a (beta) Sciverse gadget that gives searchers and researchers a window on CISTI’s electronic collection by taking the search term entered in Elsevier Hub and providing them with CISTI’s search results from a database of over 20 million journal articles.

Next year, I plan to follow up with another Sciverse gadget for my citation-based recommender that uses the full power of Elsevier’s API into its collection content.

I want to commend all and sundry at Sciverse Applications for this initiative. Opening up bibliographic data and providing developers with a developer platform (a customized version of Google’s OpenSocial platform) is exactly the right kind of thing to do both to benefit third parties (they get access to an otherwise closed and proprietary data) and to enhance their own search and discovery environment.

There are already several advanced and interesting applications on Sciverse. My favourites are: Altmetric (winner of the Science Challenge prize – see YouTube demo video below), NextBio’s Prolific Authors and Elsevier’s Table Download.

And there will be more to come. An open marketplace like this where the principles of variation and natural selection can operate will, I predict, make for a richer diversity of useful search and discovery tools than any single organization can develop on its own.

Mendeley Data vs. Netflix Data November 2, 2010

Posted by Andre Vellino in Citation, Collaborative filtering, Data, Data Mining, Digital library, Recommender, Recommender service.

Mendeley, the on-line reference management software and social networking site for science researchers, has generously offered up a reference dataset with which developers and researchers can conduct experiments on recommender systems. This release of data is their reply to the DataTel Challenge put forth at the 2010 ACM Recommender Systems Conference in Barcelona.

The paper published by computer scientists at Mendeley, which accompanies the dataset (bibliographic reference and full PDF), describes the dataset as containing boolean ratings (read / unread or starred / unstarred) for about 50,000 (anonymized) users and references to about 4.8M articles (also anonymized), 3.6M of which are unique.

I was gratified to note that this is close to the user-item ratio (1:100) that I indicated in my poster at ASIS&T2010 was typically the cause of the data sparsity problem for recommenders in digital libraries. If we measure the sparseness of a dataset by the number of edges in the bipartite user-item graph divided by the total number of possible edges, Mendeley gives 2.66E-05. Compared with the sparsity of Netflix – 1.18E-02 – that’s a difference of nearly 3 orders of magnitude!
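The sparseness measure described above is simple enough to sketch in a few lines of Python. This is only an illustration: the function name `fill_ratio` is mine, and the inputs are the rounded counts quoted in these posts (about 4.8M references, 50,000 users and 3,652,286 unique articles for Mendeley; roughly 100M ratings, 480K users and 17K titles for Netflix), so the results land close to, but not exactly on, the figures quoted above.

```python
import math


def fill_ratio(num_edges: float, num_users: float, num_items: float) -> float:
    """Edges present in the bipartite user-item graph, divided by the
    number of edges that could exist (users x items)."""
    return num_edges / (num_users * num_items)


# Rounded counts taken from the post; treat them as approximations.
mendeley = fill_ratio(4.8e6, 50_000, 3_652_286)
netflix = fill_ratio(100e6, 480_000, 17_000)

# How many orders of magnitude separate the two datasets.
gap_in_orders = math.log10(netflix / mendeley)

print(f"Mendeley: {mendeley:.2e}")
print(f"Netflix:  {netflix:.2e}")
print(f"Gap:      {gap_in_orders:.2f} orders of magnitude")
```

Note that a smaller value means a sparser dataset, which is why the much lower Mendeley figure is the worrying one.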

But raw sparsity is not all that matters. The number of users per movie is much more evenly distributed in Netflix than the number of readers per article in Mendeley, i.e. the user-item graph in Netflix is more connected (in the sense that the probability of creating a disconnected graph by deleting a random edge is much lower).

In the Mendeley data, out of the 3,652,286 unique articles, 3,055,546 (83.6%) were referenced by only 1 user and 378,114 were referenced by only 2 users. Fewer than 6% of the articles referenced were referenced by 3 or more users. [The most frequently referenced article was referenced 19,450 times!]

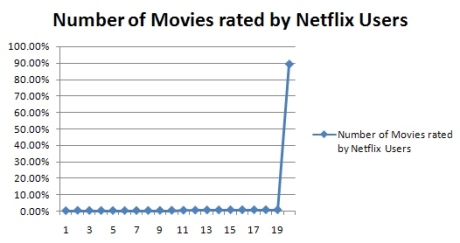
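The “3 or more users” share follows directly from the counts quoted above. Here is a small Python check of that arithmetic; the variable names are mine and the only inputs are the three counts from the post.

```python
# Counts quoted in the post for the Mendeley dataset.
total_articles = 3_652_286
one_reader = 3_055_546
two_readers = 378_114

# Whatever is left must have been referenced by 3 or more users.
three_or_more = total_articles - one_reader - two_readers

shares = {
    "1 reader": one_reader / total_articles,
    "2 readers": two_readers / total_articles,
    "3+ readers": three_or_more / total_articles,
}

for label, share in shares.items():
    print(f"{label}: {share:.2%}")
```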

By comparison, in the Netflix dataset (which contains over 100M ratings from ~480K users on ~17K titles), over 89% of the movies had been rated by 20 or more users. (See this blog post for more aggregate statistics on Netflix data.)

I think that user or item similarity measures aren’t going to work well with the kind of distribution we find in Mendeley data. Some additional information such as article citation data or some content attribute such as the categories to which the articles belong is going to be needed to get any kind of reasonable accuracy from a recommender system.

Or, it could be that some method like the heat-dissipation technique introduced by physicists in the paper “Solving the apparent diversity-accuracy dilemma of recommender systems” published in the Proceedings of the National Academy of Sciences (PNAS) could work on such a sparse and loosely connected dataset. The authors claim that this approach works especially well for sparse bipartite graphs (with no ratings information). We’ll have to try and see.

Sarkanto Scientific Search September 13, 2010

Posted by Andre Vellino in Collaborative filtering, Digital library, Information retrieval, Recommender, Recommender service, Search.

A few weeks ago I finished deploying a version of a collaborative recommender system that uses only article citations as a basis for recommending journal articles. This tool allows you to search ~7 million STM (Scientific, Technical and Medical) articles up to Dec. 2009 and to compare citation-based recommendations (using the Synthese recommender) with recommendations generated by ‘bX’ (a user-based collaborative recommender from Ex Libris). You can try the Sarkanto demo and read more about how ‘bX’ and Sarkanto compare.

Note that I’m also using this implementation to experiment with the Google Translate API and the Microsoft Translator, both to do query expansion into the other Canadian Official Language and to translate various bibliographic fields upon returning search results.

Data Archiving May 7, 2010

Posted by Andre Vellino in Data, Digital library, Information retrieval.

In a previous post, I suggested that the text from publications and the data DOIs that are referenced in them could be used as metadata for the datasets. Others have thought about addressing this issue too. For instance, The Edinburgh Research Archive’s StORe project has as a goal:

…. to address the area of interactions between output repositories of research publications and source repositories of primary research data.

However, linking conventional research output (publications) with the data they depend on may be more of a challenge than it ought to be. The authors of this paper on Constructing Data Curation Profiles discovered that very few scientists are motivated to deposit their datasets, let alone make them discoverable. What ought to be a technological no-brainer may be a cultural challenge in some quarters.

A few other interesting things emerge from looking at a variety of subject-dependent “Data Curation Profiles”:

- Scientists highly value the ability to view usage statistics (of their data).

- Data and the tools to create / view / analyse them cannot be separated.

- There are often lots of links between the data-set itself and (informative) text (e.g. documentation) that are not just the publication that it leads to.

- Data, much more so than publications, are heterogeneous – in format, structure and location as well as in the populations that generate them.

- How the data was obtained is key to understanding its significance.

This last point has a philosophical generalization: data is never “theory-free”. Why the data means anything has to be related to the theory or hypothesis that it was meant to test or support. The corollary from an information retrieval point of view is that the theoretical underpinnings of datasets must be tied (via text) to the datasets themselves.

Hence we need the lab notes, the prior literature and the context for which data is collected (all sources of indexable meta-data, I might add). Simply having zip files with numbers in them stored in a trusted digital repository isn’t going to be of much use – even if there is a lot of bibliographic metadata attached to them.

I hope I’m not belabouring the point.

P.S. I really like the Digital Curation Centre’s motto: “because good research needs good data”. Brilliant slogan! One day when data is getting all the funding, I trust that “because good data needs good research” will find its way into a University’s motto.

Google Books on Charlie Rose March 8, 2010

Posted by Andre Vellino in CISTI, Digital library, General, Open Access, Search.

I found this conversation about the “Google Books” library very interesting. It took place last night between Robert Darnton (professor of American cultural history at Harvard and Director of the Harvard University Library), David Drummond (Chief Legal Officer at Google), bestselling author James Gleick and Charlie Rose (from PBS).

I was especially pleased to see Prof. Darnton insist on the need to guarantee “the public interest”. Only he seemed to have the long view, though.